With support from the National Security Coordination Secretariat (NSCS), the Centre of Excellence for National Security (CENS) organised the first in-person Distortions, Rumours, Untruths, Misinformation, and Smears (DRUMS) workshop since the pandemic on 2 and 3 November 2022. Titled “Security in a Post Truth World”, the workshop provided a deep dive into the different dimensions of DRUMS.

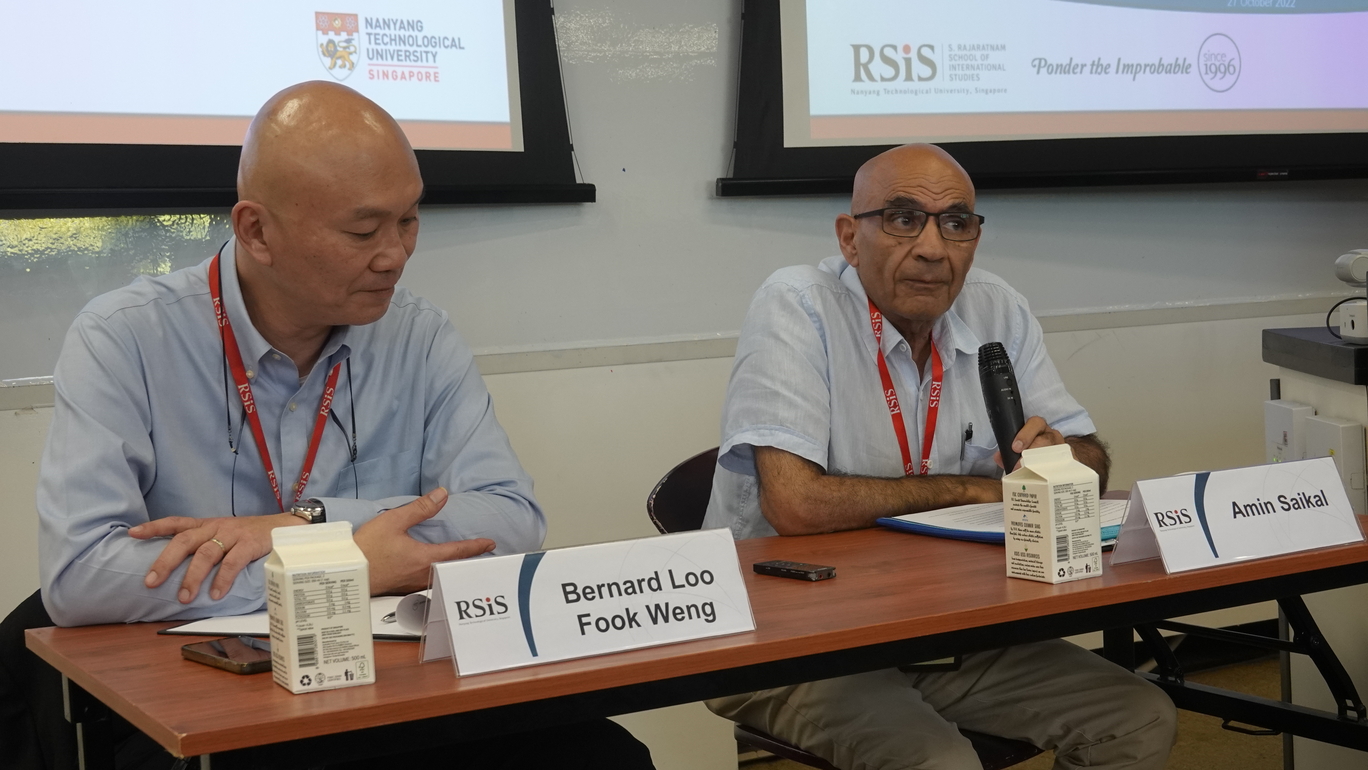

This year’s event aimed to provide an understanding of the challenges posed by a post-truth world where disinformation is endemic. It also explored new methods to mitigate these challenges. Dr Shashi Jayakumar, Senior Fellow and Head of CENS, opened the event with a welcome address, and 18 speakers lent their expertise across six panels and, for the first time, a special technical presentation.

On day one, the first panel, titled “Alternate Spaces in Influence Operations”, examined how malicious actors have devised novel methods of spreading disinformation and hate speech on platforms such as WhatsApp and Wikipedia, resulting in ever-evolving forms of influence operations. Building on these discussions, a special technical presentation demonstrated how social media algorithms can be used to affect the influence of a message by shaping both the narrative and the community.

The second panel discussed ongoing countermeasures against online misinformation, including the effectiveness and credibility of current fact-checking initiatives. Of particular interest was the development of solutions such as critical-thinking mobile games, which give players an interactive, technology-driven incentive to build resilience against misinformation. In the third panel, experts from Thailand, Indonesia, and Australia discussed the regulatory approaches to social media platforms in their respective countries, and the associated challenges.

Day two began with a panel on “Strategic Communication and Alternative Media Channels”, which explored how rapid growth in global Internet adoption and advances in Internet communications technology have magnified the scale and spread of disinformation. It also outlined innovative and strategic communication approaches to the problem, incorporating AI, edutainment, and social media literacy. The next panel featured speakers from Article19, NewsGuard, and Meta, who discussed the creation of a resilient and sustainable information ecosystem. Identifying misinformation as a moving target, the panellists emphasised the long-term importance of giving users the necessary tools to empower their own online decision-making, as opposed to unenforceable policies that simply prohibit “misinformation”.

The workshop’s final panel dealt with “Identity and Online Harms” against the backdrop of the surge in Online Gender-Based Violence (OGBV) during the pandemic. Panellists focused on violent extremism and misogyny, and underlined the urgent need to better understand the gendered insecurities created by online harassment and disinformation. The panel also identified the absence of appropriate laws and regulations as one of the main challenges in addressing OGBV, and discussed how future laws and regulations may be designed to protect OGBV victims.

To allow participants to engage with these topics and interact with the panellists, the workshop also featured Q&A sessions and syndicate room discussions. The key takeaways from this workshop were extremely valuable for Singapore as it continues to confront the challenges posed by online misinformation. These challenges were deeply exacerbated during the global pandemic, when the increased use of the Internet and social media platforms led to growing engagement with increasingly extremist content, with violent real-world consequences in Asia Pacific and beyond. This workshop provided a valuable opportunity for policymakers, practitioners, and the academic community in Singapore to examine more closely the impact of malicious online activity, as well as various perspectives on how these effects may be successfully mitigated in the future.